|

IQR eCQM Requirements

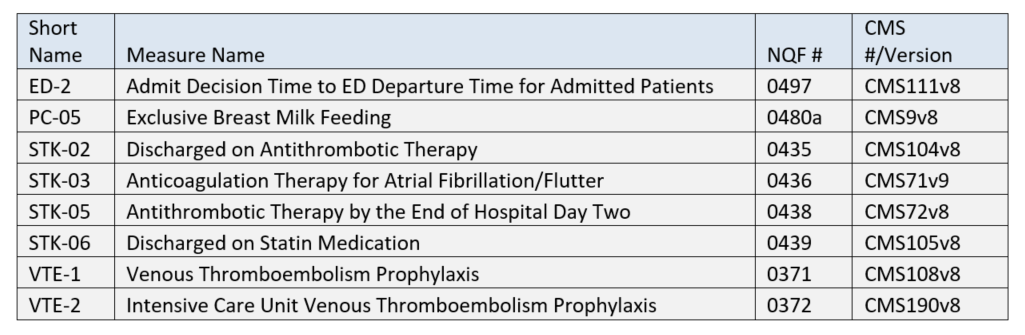

The reporting period continues to be one self-selected quarter of the 2020 calendar year. While you are only required to submit 4 eCQMs, your EHR must be certified for all 8 eCQMs listed above.

More good news! The QualityNet site has been completely redesigned. Hands-on users report that the new site is much easier to use than the old one.

Joint Commission

ePC-02 (Cesarean Birth)

Numerator = Nulliparous women with a term, singleton baby in a vertex position delivered by cesarean birth

Denominator = Inpatient hospitalizations for nulliparous patients delivered of a live term singleton newborn >= 37 weeks' gestation
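As a rough illustration of how the numerator and denominator above combine into a rate, here is a minimal Python sketch. The `Delivery` record and its field names are hypothetical and are not taken from the measure specification; the real ePC-02 logic also has exclusions that are not modeled here.

```python
from dataclasses import dataclass


@dataclass
class Delivery:
    # Hypothetical fields for illustration only; not the measure's data model.
    nulliparous: bool
    gestational_age_weeks: int
    singleton: bool
    live_birth: bool
    vertex: bool
    cesarean: bool


def in_denominator(d: Delivery) -> bool:
    # Nulliparous patient delivered of a live, term (>= 37 weeks), singleton newborn.
    return (d.nulliparous and d.live_birth and d.singleton
            and d.gestational_age_weeks >= 37)


def in_numerator(d: Delivery) -> bool:
    # Denominator case with a vertex presentation delivered by cesarean birth.
    return in_denominator(d) and d.vertex and d.cesarean


def cesarean_rate(deliveries):
    """Return numerator / denominator, or None when the denominator is empty."""
    denominator = [d for d in deliveries if in_denominator(d)]
    if not denominator:
        return None
    numerator = [d for d in denominator if in_numerator(d)]
    return len(numerator) / len(denominator)
```

Note that a lower rate is the better result for this measure.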

Any DHIT clients interested in reporting ePC-02 should let us know ASAP. |

Wednesday, January 15, 2020

2020 Hospital eCQM Reporting

Friday, January 10, 2020

MIPS/QPP 2020

Now that 2020 is here, how about 20/20 vision to focus on an outstanding MIPS score? If that’s your goal, we hope this blog will help! In case you weren’t paying attention, this is the 4th year of the Quality Payment Program (QPP), which spawned the Merit-based Incentive Payment System (MIPS) and Advanced Alternative Payment Models (APMs). The MIPS 2020 Final Rule was published November 1st, but maybe you missed reading it due to its 996-page length, the busy holiday season, etc. So here’s our synopsis. For the sake of brevity, we’ll skip the eligibility and APM criteria and focus on requirements for those who opt in (voluntarily or kicking and screaming!) for MIPS/QPP.

Overall Scoring and Financial Incentives

- Quality 45%, Cost 15%, Promoting Interoperability (PI) 25%, Improvement Activities (IA) 15%. These weights are unchanged from last year, although CMS is mandated to bring Quality and Cost to 30% each by 2022.

- The Performance Threshold increased from 30 points to 45 points. For clinicians, this means you’ll have to score at least 45 points to avoid a penalty. Likewise, the exceptional performance threshold increased from 75 to 85 points.

- Greater financial penalties and rewards – non-participants get dinged 9%. Theoretically, bonuses could also be up to 9% but probably won’t be that high due to budget neutrality requirements.

- Reminder that starting with 2018 (Year 2) data, MIPS scores will be publicly reported on the Physician Compare website.
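To make the weighting and thresholds above concrete, here is a minimal Python sketch. It assumes each category's performance has already been expressed as a 0-100 percentage, and it ignores bonus points, category reweighting and other special cases in the Final Rule. The function names and outcome labels are ours, for illustration only.

```python
# 2020 category weights in percentage points, as listed above.
WEIGHTS_2020 = {"quality": 45, "cost": 15, "pi": 25, "ia": 15}

PERFORMANCE_THRESHOLD = 45   # minimum final score to avoid a penalty
EXCEPTIONAL_THRESHOLD = 85   # minimum final score for exceptional performance


def final_score(category_pct):
    """Weighted sum of category percentages (each 0-100), on a 0-100 scale."""
    return sum(WEIGHTS_2020[c] * category_pct[c] for c in WEIGHTS_2020) / 100


def classify(score):
    """Map a final score onto the 2020 thresholds (labels are illustrative)."""
    if score < PERFORMANCE_THRESHOLD:
        return "penalty"
    if score >= EXCEPTIONAL_THRESHOLD:
        return "exceptional"
    return "no penalty"
```

For example, a practice at 60% on Quality, 50% on Cost, 80% on PI and 100% on IA would land at 69.5 points under these assumptions: clear of the penalty, but short of the exceptional performance threshold.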

Quality Performance Category

- Medicare Part B Claims measures: 70% sample of Medicare Part B patients (up from 60%) for the performance period

- QCDR measures, MIPS CQMs, and eCQMs: 70% sample of clinician's or group's patients (up from 60%) across all payers – no “cherry picking” is allowed.

- Driven by the Meaningful Measures initiative, CMS removed 42 measures (quality ID #’s): 046, 51, 68, 91, 109, 131, 160, 165, 166, 179, 192, 223, 255, 262, 271, 325, 328, 329, 330, 343, 345, 346, 347, 352, 353, 361, 362, 371, 372, 388, 403, 407, 411, 417, 428, 442, 446, 449, 454, 456, 467 and 474. Of these, 4 were eCQMs: 160, 192, 371 and 372.

- On page 63214 of the Federal Register Final Rule, there is a table of recommended CQMs by medical specialty. I don’t normally endorse reading the Federal Register but this is a valuable resource. The following specialty areas were added for 2020:

  o Audiology
  o Clinical Social Work
  o Chiropractic Medicine
  o Endocrinology
  o Nutrition/Dietician
  o Pulmonology
  o Speech Language Pathology

Improvement Activities Performance Category

- There are 2 new IAs, 7 modified IAs and 15 that were removed.

- Page 63514 of the Federal Register has a table of all 2020 IAs.

- For groups, the number of clinicians who must perform an IA has increased from just 1 to at least 50% of the group, for any continuous 90-day period.

Promoting Interoperability Performance Category

- The optional Query of PDMP measure will require a Yes/No response instead of a numerator/denominator.

- The optional Verify Opioid Treatment Agreement measure has been removed.

Cost Performance Category

- There are 10 new episode-based measures:

| Measure | Episode Measure Type |
|---|---|
| Acute Kidney Injury Requiring New Inpatient Dialysis | Procedural |
| Elective Primary Hip Arthroplasty | Procedural |
| Femoral or Inguinal Hernia Repair | Procedural |
| Hemodialysis Access Creation | Procedural |
| Inpatient Chronic Obstructive Pulmonary Disease (COPD) Exacerbation | Acute inpatient medical condition |
| Lower Gastrointestinal Hemorrhage (applies to groups only) | Acute inpatient medical condition |
| Lumbar Spine Fusion for Degenerative Disease, 1-3 Levels | Procedural |
| Lumpectomy, Partial Mastectomy, Simple Mastectomy | Procedural |
| Non-Emergent Coronary Artery Bypass Graft (CABG) | Procedural |
| Renal or Ureteral Stone Surgical Treatment | Procedural |

To Summarize: CMS has once again raised the bar and the financial stakes, so we recommend starting your MIPS/QPP planning ASAP and monitoring scores regularly throughout the reporting year. Our Dynamic Registry platform has great tools for this and is available with or without contracting for our services as a QPP Qualified Registry.

Tuesday, July 16, 2019

Da Vinci Connectathon and the Challenge of eCQM Data Capture

Dynamic Health IT (DHIT) was in attendance for the Da Vinci FHIR Connectathon hosted by GuideWell (parent company of Florida Blue) in Jacksonville, Florida. For those of you not familiar, the Da Vinci Project is a collaboration of trail-blazers in the healthcare space who are looking to revolutionize information sharing. It is a private sector initiative made up of experts from some of the largest and most prestigious payers, providers and vendors in the healthcare marketplace. Their goal is to accelerate the adoption of HL7® FHIR® as the standard to support and integrate data exchange for value-based care (VBC), with a focus on provider/payer data exchange.

The Connectathon featured three different tracks. We participated in the Clinical Reasoning Track, which focuses on exploring the use of FHIR to calculate Clinical Quality Measures (CQMs). As we’ve contributed to this track, we’ve also increased our understanding of issues related to migrating to FHIR-based CQMs. For DHIT, this means the integration of separate products: Dynamic FHIR Server with CQMsolution.

As it stands today, FHIR data types are not used in the calculation of eCQMs. (eCQMs are the Clinical Quality Measures generated directly from EHR data with no intervening manual abstracting process.) But Da Vinci members are looking to retire the Quality Data Model (QDM) data elements currently used for calculation and presentation in the QRDA Cat I files and replace them with FHIR. A FHIR Measure Report could replace the QRDA Cat I and QRDA Cat III files. FHIR also offers new operations that instruct the FHIR server to perform measure calculation. Tangentially, DHIT has explored the use of the 2.1 CCDA as a data source for calculating eCQMs (more on that later).

The Clinical Reasoning Track is evolving as well. Previous iterations used "normal" QDM-based eCQMs but relied on FHIR patient data that was converted using QDM to QI Core Mappings. Developers have been hard at work doing a trial run to populate existing eCQMs by using FHIR data types. This will make it possible to run FHIR-based eCQMs against FHIR data elements.

Da Vinci has expanded this track to include additional operations related to submitting and collecting data. These include the submission of data from the producer to the consumer; in this scenario, an EHR might submit data directly to a payer. Additionally, consumers can request data from the producer using a “Collect Data” operation or subscribe to the producer's Subscription Service to be notified when CQM data becomes available. All of these operations limit the scope of data collected to what is required by the measures.
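For readers who want a feel for what these operations look like on the wire, here is a small Python sketch that builds request URLs for the `$evaluate-measure` and `$collect-data` operations defined on the FHIR R4 Measure resource. The base URL and the measure id `vte-1` are placeholders we made up, and a real server may require additional parameters or authentication; the sketch does not send any request.

```python
from urllib.parse import urlencode

# Hypothetical FHIR server base URL, for illustration only.
BASE_URL = "https://fhir.example.org/r4"


def measure_operation_url(base_url, measure_id, operation,
                          period_start, period_end, **extra):
    """Build a GET URL for an instance-level operation on a Measure resource.

    `operation` is the operation name without the leading "$",
    e.g. "evaluate-measure" or "collect-data".
    """
    params = {"periodStart": period_start, "periodEnd": period_end, **extra}
    return f"{base_url}/Measure/{measure_id}/${operation}?{urlencode(params)}"


# Ask the server to calculate the measure for a reporting period.
evaluate_url = measure_operation_url(
    BASE_URL, "vte-1", "evaluate-measure", "2019-01-01", "2019-12-31")

# Ask the producer for only the data the measure requires.
collect_url = measure_operation_url(
    BASE_URL, "vte-1", "collect-data", "2019-01-01", "2019-12-31")
```

The submission direction (producer to consumer) uses `$submit-data`, which is a POST with a Parameters body rather than a query string, so it is left out of this sketch.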

During this Connectathon we focused on one CQM: Venous Thromboembolism Prophylaxis (VTE-1). The main issue we faced was the sheer scope of changes required to begin this process. For example, the version of the CQL language itself differs between the current CMS version of the measure and the newly released FHIR version of the measure. In the end, Dynamic Health IT was able to make considerable progress towards the goal of the track.

Back to the challenge of using the 2.1 CCDA as a data source for calculating eCQMs: Based on our research to date, many current eCQMs cannot be accurately calculated from standard 2.1 CCDA data elements. NCQA offers an eCQM certification process that involves calculating quality measures from CCDA documents, but it only includes a subset of the MIPS/QPP eCQMs and some are older definitions. Why is this? We suspect it is because the remainder are problematic to calculate from a CCDA.

There are several reasons for this:

- Many measures include exceptions and exclusions, and these are not captured in a standard CCDA. For example, skipping a breast cancer screening for a woman with bilateral mastectomies would be an exclusion. “Patient refused to get a flu shot” would be an exception. Calculating eCQMs without exceptions and exclusions is possible but will result in an inaccurate and lower score.

- A typical 2015 Edition Certified 2.1 CCDA (the kind produced by most EHRs) lacks a standard representation for the Adverse Event used by CMS347v2 (Statin Therapy).

- Some measures call for Assessments but there's no standard way to convey the result of the Assessment in the CCDA.
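To show why missing exclusions and exceptions lower the score, here is a minimal Python sketch of a proportion-measure performance rate. It assumes the usual convention that excluded and excepted patients are removed from the denominator before the rate is computed. The function name and the counts in the example are made up for illustration.

```python
def performance_rate(denominator, numerator, exclusions=0, exceptions=0):
    """Numerator divided by the denominator remaining after exclusions and exceptions.

    Returns None when no eligible patients remain.
    """
    eligible = denominator - exclusions - exceptions
    if eligible <= 0:
        return None
    return numerator / eligible


# Illustrative counts only: 100 patients in the denominator, 60 met the measure,
# 10 qualify for an exclusion and 10 for an exception.
with_adjustments = performance_rate(100, 60, exclusions=10, exceptions=10)

# The same population when the CCDA carries no exclusion or exception data.
without_adjustments = performance_rate(100, 60)
```

With these counts the rate is 0.75 when exclusions and exceptions are available and 0.6 when they are not, even though the care delivered is identical.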

DHIT’s flagship product ConnectEHR is certified for CCDA creation. We are currently working with HL7 to expand the CCDA data elements to accommodate more of the eCQM measure requirements. The most recent adjustment to the CCDA is related to negation. There are also other issues related to using a CCDA as a data source, such as values being provided as free text rather than being codified, and different EHR systems capturing the same clinical values/events in different ways. Despite these obstacles, we continue to pursue the eCQM data capture challenge and look forward to participating in the next Connectathon in Atlanta.

Hope to see you there!

Wednesday, May 15, 2019

2019 MIPS – What You Need to Know

You’ve completed 2018 MIPS – everything is submitted and filed away. Time to relax?

Well, you certainly deserve some R&R, but don’t lose sight of the upcoming MIPS challenges and opportunities for the 2019 reporting year. Increasingly, MIPS success will mean a year-round focus as CMS ratchets up scoring thresholds and imposes greater penalties for weak and non-performers. Here is our roundup of changes that will present challenges and opportunities in the upcoming year.

Opportunities

- By June of 2019, CMS will have digested and posted MIPS scores in a patient-friendly format on the Medicare Physician Compare website. The site will have a new hyperlink indicating “Performance information available”. This “Performance information” is derived from MIPS scoring and may be used not just by patients and prospective patients but by any other interested parties. So, even though your practice may provide excellent patient care, a sub-standard MIPS score could drag you down.

- Strong performers can submit both as a group and as individuals and then choose the higher score. Eligible Clinicians now include physical therapists, occupational therapists, qualified speech-language pathologists, qualified audiologists, clinical psychologists, and registered dietitians/nutrition professionals.

- eCQMs, Promoting Interoperability and Improvement Activities (details below) can now all be submitted via the new QPP API, eliminating the old manual upload process.

Challenges

- 2015 Certified software must be in place during the entire reporting period, although it is permissible for the certification to happen after the start of the reporting period, as long as it is prior to the end of the reporting period. 2014 Certified software is no longer acceptable for 2019 reporting.

- To avoid a penalty, the minimum score is 30 points, as opposed to 15 points in 2018. Likewise, the exceptional performance bonus threshold is up from 70 points to 75 points.

- The 4 categories from 2018 remain but some percentages have been adjusted for 2019:

1. Quality 45% (decrease of 5%)

   - Minimum of 6 measures for 1 year
   - 1 must be an Outcome or High Priority measure (awarded higher points)
   - Bonus points awarded if you choose the same measure and show improvement from 2018
   - Avoid topped-out measures, since scoring is capped at a maximum of 7 points
   - On the overall list of Quality Measures, 26 were removed and 8 were added (6 of which are high priority -- see chart below)
   - Of the 26 and 8, some eCQMs changed:
     o CMS 249 and CMS 349 have been added
     o CMS 65, CMS 123, CMS 158, CMS 164, CMS 167, CMS 169 were removed
     o CMS 166, previously for Medicaid-only submission, has been phased out.
   - New for 2019: CMS will aggregate eCQMs collected through multiple collection types; if the same measure is collected, the greatest number of measure achievement points will be awarded.
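The "greatest number of measure achievement points" rule can be pictured with a short Python sketch. The tuple layout, function name and sample values are our own assumptions for illustration; they do not reflect how CMS stores or scores submissions internally.

```python
def best_points_per_measure(submissions):
    """Keep, for each measure, the submission with the most achievement points.

    `submissions` is an iterable of (measure_id, collection_type, points) tuples.
    Returns {measure_id: (collection_type, points)}; on a tie the first one seen wins.
    """
    best = {}
    for measure_id, collection_type, points in submissions:
        if measure_id not in best or points > best[measure_id][1]:
            best[measure_id] = (collection_type, points)
    return best


# Made-up sample data: the same measure reported through two collection types.
sample = [
    ("CMS165", "eCQM", 6.2),
    ("CMS165", "MIPS CQM", 7.5),
    ("CMS122", "eCQM", 4.0),
]
result = best_points_per_measure(sample)
```

Here `result` keeps the MIPS CQM submission for CMS165 and the only available submission for CMS122.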

| Measure ID | eCQM ID | New Measures for 2019: Name | Measure Type |
|---|---|---|---|
| 468 | None | Continuity of Pharmacotherapy for Opioid Use Disorder | High Priority |
| 469 | None | Average Change in Functional Status Following Lumbar Spine Fusion Surgery | High Priority |
| 470 | None | Average Change in Functional Status Following Total Knee Replacement Surgery | High Priority |
| 471 | None | Average Change in Functional Status Following Lumbar Discectomy Laminectomy Surgery | High Priority |
| 472 | CMS249v1 | Appropriate Use of DXA Scans in Women Under 65 Years Who Do Not Meet the Risk Factor Profile for Osteoporotic Fracture | High Priority |
| 473 | None | Average Change in Leg Pain Following Lumbar Spine Fusion Surgery | High Priority |
| 474 | None | Zoster (Shingles) Vaccination | Process |
| 475 | CMS349v1 | HIV Screening | Process |

2. Promoting Interoperability 25%

   - Minimum of 90 days
   - Only one set of objectives & measures (reduced from 2 in 2018)
   - 4 Objectives include: e-Prescribing, Health Info Exchange, Provider to Patient Exchange and Public Health & Clinical Data Exchange
   - 50 point “base value”/bonus from 2018 has been removed

3. Improvement Activities 15%

   - Minimum of 90 days
   - Added 6, Modified 5, removed 1 = 118 total Improvement Activities
   - Bonus removed

4. Cost 15% (increase of 5%)

   - 1 year
   - No actual submission

Deadlines

- Groups must register by June 30.

- Submission Deadline: March 31, 2020

The bottom line (in our opinion): Don’t wait until the year is over to take action to improve your MIPS score. Remember, the bar is set higher for 2019 and the financial incentives and penalties are also greater.
